The Fine Line: Understanding When Machine Learning Becomes Too Intelligent

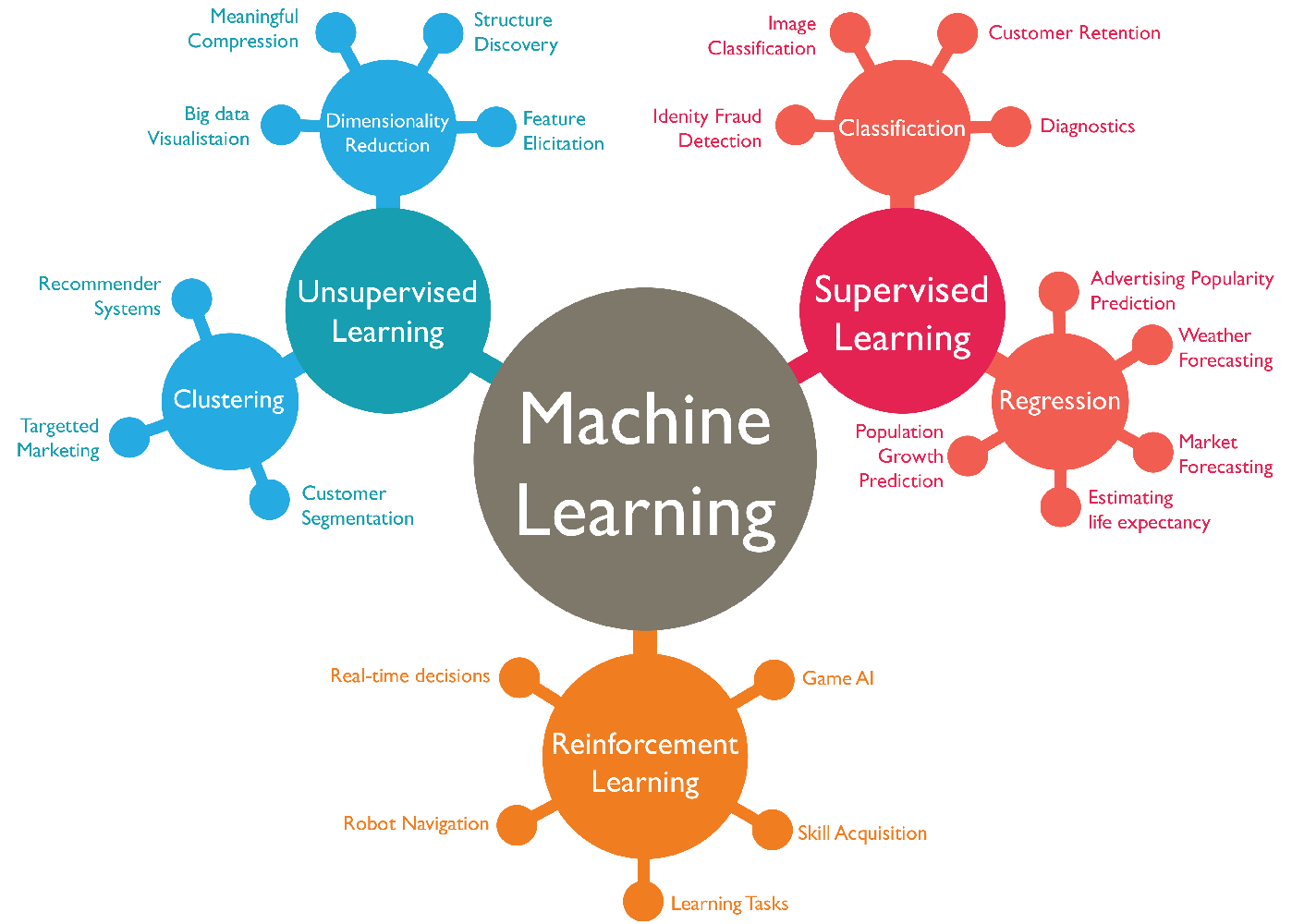

As artificial intelligence advances, it becomes critical to understand when machine learning becomes "too intelligent." At its core, machine learning is designed to analyze data, identify patterns, and make predictions based on those patterns. As algorithms grow more sophisticated, however, there is a concern that they may outpace human oversight and influence decision-making in unintended ways. This gap widens when AI systems not only make decisions but also learn from the outcomes of those decisions, creating a feedback loop that pulls machine reasoning further away from human judgment.

The implications of overly intelligent machine learning models are broad. In sectors such as healthcare and finance, decisions made by AI can have serious real-world consequences. Researchers and practitioners therefore need ethical guidelines that draw a clear line between innovation and control. Without such boundaries, decision-making could come to be dominated by opaque AI systems, raising questions about accountability, bias, and the nature of human autonomy in the face of intelligent algorithms.

Are We Ready for AI Decisions? Exploring the Risks of Overly Smart Algorithms

The rise of artificial intelligence has prompted significant discussion about AI decisions, particularly about how far we are prepared to rely on complex algorithms that mimic aspects of human thinking. As these systems become increasingly autonomous, the risks of overly smart algorithms raise crucial questions. Can we trust machines to make important decisions in areas such as healthcare, criminal justice, and financial services? Algorithmic bias and a lack of transparency in machine learning processes can lead to unintended consequences that affect millions of lives.

Moreover, the rapid development of AI technologies has outpaced our ethical and regulatory frameworks. As a society, we must critically evaluate whether our institutions are equipped to handle the implications of AI decisions. Proper governance is essential to address risks such as unclear accountability and violations of data privacy. This calls for collaboration among technologists, policymakers, and ethicists so that, as we embrace intelligent algorithms, we do so with a clear awareness of their limitations and potential pitfalls.

From Predictive to Prescriptive: How Smart Algorithms are Changing the Game

The transition from predictive to prescriptive analytics marks a significant step forward in data-driven decision-making. Predictive analytics forecasts future outcomes from historical data; prescriptive analytics goes further by recommending the actions most likely to achieve a desired result. This shift is largely powered by smart algorithms that combine models and large datasets to identify trends and then suggest the best course of action under stated objectives and constraints. As businesses strive for efficiency and effectiveness, understanding this shift is crucial to gaining a competitive edge.

Smart algorithms have brought substantial changes to many sectors. In healthcare, for example, algorithms can analyze patient data to recommend treatment plans tailored to individual needs, with the aim of improving patient outcomes. In supply chain management, similar algorithms can optimize inventory levels and distribution routes, which can reduce costs and improve operational efficiency. The move from predictive to prescriptive analytics is therefore more than a technological upgrade: it allows organizations to act proactively and strategically in a constantly changing environment.
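To make the predictive/prescriptive distinction concrete, here is a minimal Python sketch of the inventory case. It assumes a single product, a hypothetical demand history, a fixed selling price and unit cost, and no salvage value for unsold stock. The function names are illustrative and do not come from any particular library. The predictive step produces a moving-average demand forecast. The prescriptive step recommends an order quantity by maximizing average profit over past demand scenarios, which is a simple sample-average version of the classic newsvendor problem.

```python
from statistics import mean

# Hypothetical weekly demand history for one product (illustrative numbers only).
HISTORY = [90, 110, 100, 95, 105, 120, 80, 100]


def predict_demand(history, window=4):
    """Predictive step: forecast next period's demand as a moving average."""
    return mean(history[-window:])


def expected_profit(order_qty, demand_scenarios, price, unit_cost):
    """Average profit of ordering `order_qty` units across past demand scenarios.

    Assumes unsold units are worthless (no salvage value).
    """
    profits = []
    for demand in demand_scenarios:
        sold = min(order_qty, demand)  # can't sell more than was stocked or demanded
        profits.append(price * sold - unit_cost * order_qty)
    return mean(profits)


def prescribe_order(demand_scenarios, price, unit_cost, candidates):
    """Prescriptive step: recommend the candidate quantity with the highest expected profit."""
    return max(
        candidates,
        key=lambda q: expected_profit(q, demand_scenarios, price, unit_cost),
    )


if __name__ == "__main__":
    forecast = predict_demand(HISTORY)
    order = prescribe_order(HISTORY, price=10, unit_cost=4, candidates=range(80, 121))
    print(f"forecast demand: {forecast}, recommended order: {order}")
```

With this sample data the forecast is 101.25 units, but the recommended order is 100. The forecast answers "how much will we sell?", while the prescriptive step answers "how much should we stock?". It does this by weighing the cost of unsold units against the profit lost on missed sales, rather than simply following the forecast.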